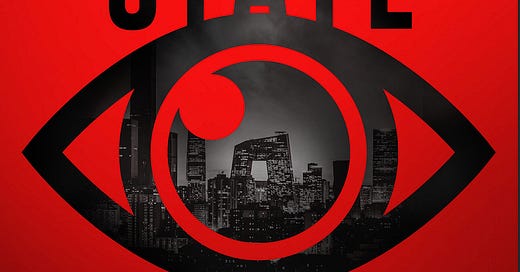

Q&A: Josh Chin and Liza Lin on How China Created a 21st Century Surveillance State

A new book details how much of China's authoritarian technology was made in America

Josh Chin and Liza Lin cover China for The Wall Street Journal. Chin is deputy bureau chief for China, based in Seoul and Taiwan, and Lin is a China correspondent based in Singapore.

Together, they are…
